| 2022 |

Mickael Sereno, Stéphane Gosset, Lonni Besançon, and Tobias Isenberg. |

"Hybrid Touch/Tangible Spatial Selection in Augmented Reality." Computer Graphics Forum, 41(3), June 2022. To appear |

|

|

|

|

|

| 2022 |

Xiyao Wang, Lonni Besançon, Mehdi Ammi, Tobias Isenberg |

"Understanding differences between combinations of 2D and 3D input and output devices for 3D data visualization" International Journal of Human-Computer Studies, Elsevier, 2022, 163, pp.102820. |

|

|

|

|

|

| 2022 |

Lonni Besançon, Elisabeth Bik, James Heathers, Gideon Meyerowitz-Katz |

"Correction of scientific literature: Too little, too late!" PLOS Biology 20(3): e3001572 |

|

|

|

|

|

| 2022 |

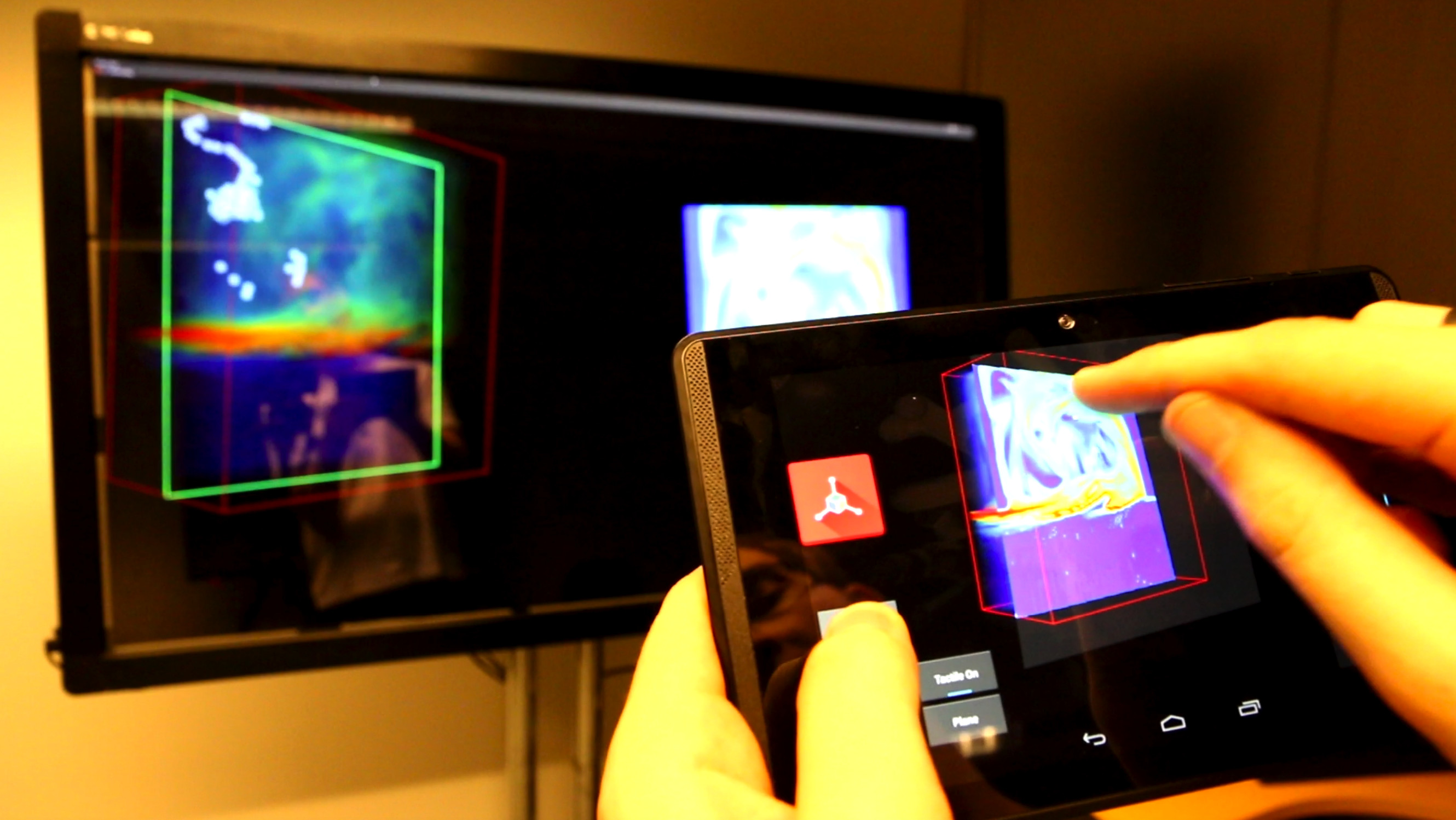

Mickael Sereno, Lonni Besançon, Tobias Isenberg |

"Point Specification in Collaborative Visualization for 3D Scalar Fields Using Augmented Reality" Virtual Reality, Springer Verlag, In press |

|

|

|

|

|

| 2022 |

Mickael Sereno, Xiyao Wang, Lonni Besançon, Michael McGuffin, Tobias Isenberg |

"Collaborative Work in Augmented Reality: A Survey" IEEE Transactions on Visualization and Computer Graphics, Institute of Electrical and Electronics Engineers, In press |

|

|

|

|

|

| 2022 |

Olivier Pourret, Dasapta Erwin Irawan, Najmeh Shaghaei, Elenora M van Rijsingen, Lonni Besançon |

"Towards more inclusive metrics and open science to measure research assessment in Earth and natural sciences" Frontiers in Research Metrics and Analytics, In press |

|

|

|

|

|

| 2021 |

Gideon Meyerowitz-Katz, Lonni Besançon, Antoine Flahault, Raphael Wimmer |

"Impact of mobility reduction on COVID-19 mortality: absence of evidence might be due to methodological issues" Nature Scientific Reports |

|

|

|

|

|

| 2021 |

Ricardo Langner, Lonni Besançon, Christopher Collins, Tim Dwyer, Petra Isenberg, Tobias Isenberg, Bongshin Lee, Charles Perin, Christian Tominski |

"An Introduction to Mobile Data Visualization" Book Chapter in "Mobile Data Visualization" |

|

|

|

|

|

| 2021 |

Lonni Besançon, Wolfgang Aigner, Magdalena Boucher, Tim Dwyer, Tobias Isenberg |

"3D Mobile Data Visualization" Book Chapter in "Mobile Data Visualization" |

|

|

|

|

|

| 2021 |

Jim Smiley, Benjamin Lee, Siddhant Tandon, Maxime Cordeil, Lonni Besançon, Jarrod Knibbe, Bernhard Jenny, Tim Dwyer |

"The MADE-Axis: A Modular Actuated Device to Embody the Axis of a Data Dimension" Proc. ACM Hum.-Comput. Interact. 5, ISS, Article 501 (November 2021), 23 pages.  |

|

|

|

|

|

| 2021 |

Lonni Besançon, Nathan Peiffer-Smadja, Corentin Segalas, Haiting Jiang, Paola Masuzzo, Cooper Smout, Eric Billy, Maxime Deforet, Clémence Leyrat |

"Open science saves lives: lessons from the COVID-19 pandemic" BMC Medical Research Methodology 21, 117 (2021) |

|

|

|

|

|

| 2021 |

Jouni Helske, Satu Helske, Matthew Cooper, Anders Ynnerman, Lonni Besançon |

"Can visualization alleviate dichotomous thinking? Effects of visual representations on the cliff effect" IEEE Transactions on Visualization and Computer Graphics, vol. 27, no. 8, pp. 3397-3409, 1 Aug. 2021. |

|

|

|

|

|

| 2021 |

Lonni Besançon, Anastasia Bezerianos, Pierre Dragicevic, Petra Isenberg, Yvonne Jansen |

"Publishing Visualization Studies as Registered Reports: Expected Benefits and Researchers' Attitudes" Proceedings of the first alt.VIS workshop, IEEE VIS 2021. |

|

|

|

|

|

| 2021 |

Nicholas Spyrison, Benjamin Lee, Lonni Besançon |

"'Is IEEE VIS *that* good?' On key factors in the initial assessment of manuscript and venue quality" Proceedings of the first alt.VIS workshop, IEEE VIS 2021. |

|

|

|

|

|

| 2021 |

Lonni Besançon, Anders Ynnerman, Daniel Keefe, Lingyun Yu, Tobias Isenberg |

"The State of the Art of Spatial Interfaces for 3D Visualization" Computer Graphics Forum, Wiley, 2021, 40 (1), pp.293--326. |

|

|

|

|

|

| 2021 |

Moritz Hilscher, Hendrik Tjabben, Hendrik Rätz, Amir Semmo, Lonni Besançon, Jürgen Döllner, Matthias Trapp |

"Service-based Analysis and Abstraction for Content Moderation of Digital Images" Graphics Interface 2021, May 2021, Vancouver, Canada. |

|

|

|

|

|

| 2020 |

Lonni Besançon, Niklas Rönnberg, Jonas Löwgren, Jonathan P Tennant, Matthew Cooper |

"Open up: a survey on open and non-anonymized peer reviewing" Research Integrity and Peer Review 5, 8 (2020) |

|

|

|

|

|

| 2020 |

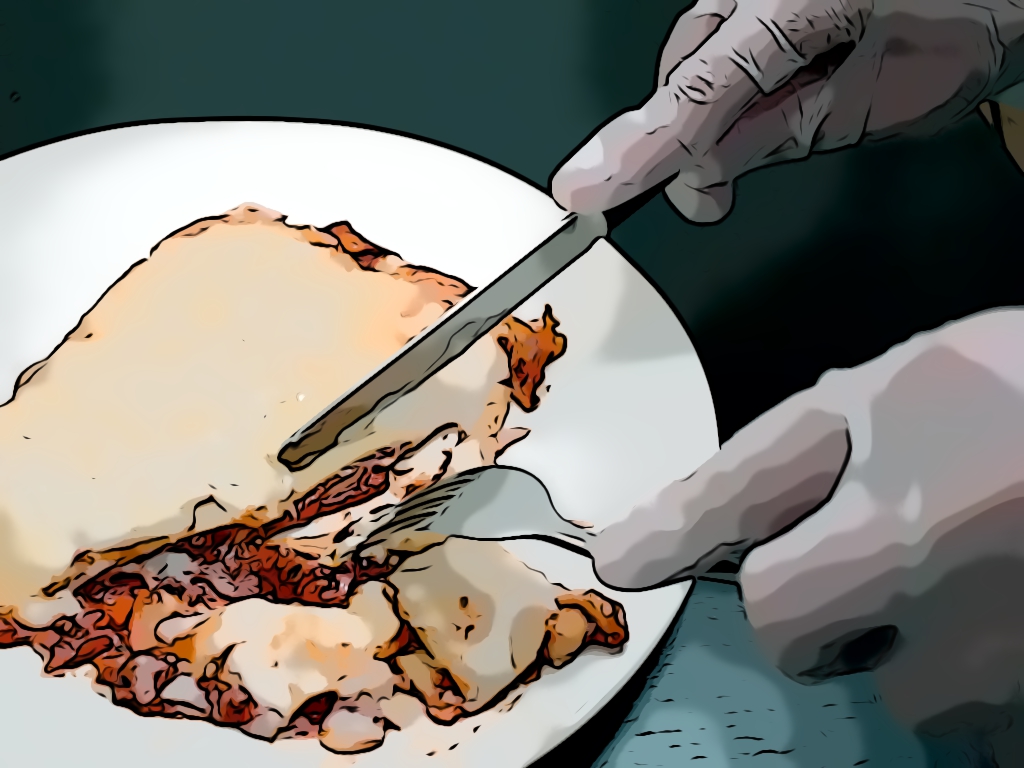

Lonni Besançon, Amir Semmo, David Biau, Bruno Frachet, Virginie Pineau, El Hadi Sariali, Marc Soubeyrand, Rabah Taouachi, Tobias Isenberg, Pierre Dragicevic |

"Reducing Affective Responses to Surgical Images and Videos Through Stylization" Computer Graphics Forum, Wiley, 2020, 39 (1), pp.462--483. |

|

|

|

|

|

| 2020 |

Xiyao Wang, David Rousseau, Lonni Besançon, Mickael Sereno, Mehdi Ammi, Tobias Isenberg |

"Towards an understanding of augmented reality extensions for existing 3D data analysis tools" CHI '20 - 38th SIGCHI conference on Human Factors in computing systems, Apr 2020, Honolulu, United States |

|

|

|

|

|

| 2020 |

Andy Cockburn, Pierre Dragicevic, Lonni Besançon, Carl Gutwin |

"Threats of a replication crisis in empirical computer science" Communications of the ACM, August 2020, Vol. 63 No. 8, Pages 70-79 |

|

|

|

|

|

| 2019 |

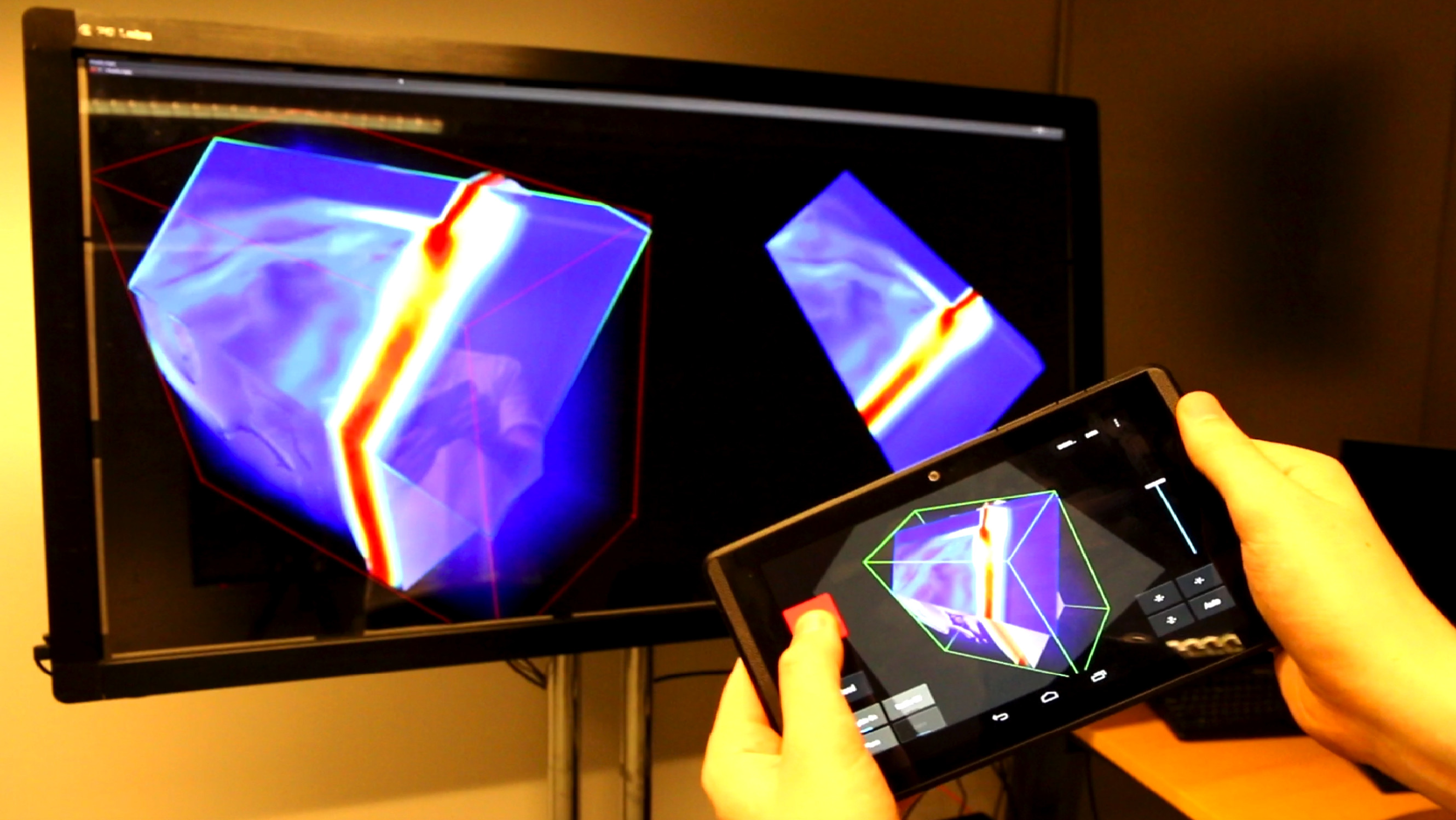

Lonni Besançon, Mickael Sereno, Lingyun Yu, Mehdi Ammi, Tobias Isenberg |

"Hybrid Touch/Tangible Spatial 3D Data Selection" Computer Graphics Forum, Wiley, In press, Eurographics Conference on Visualization (EuroVis 2019), 38. |

|

|

|

|

|

| 2019 |

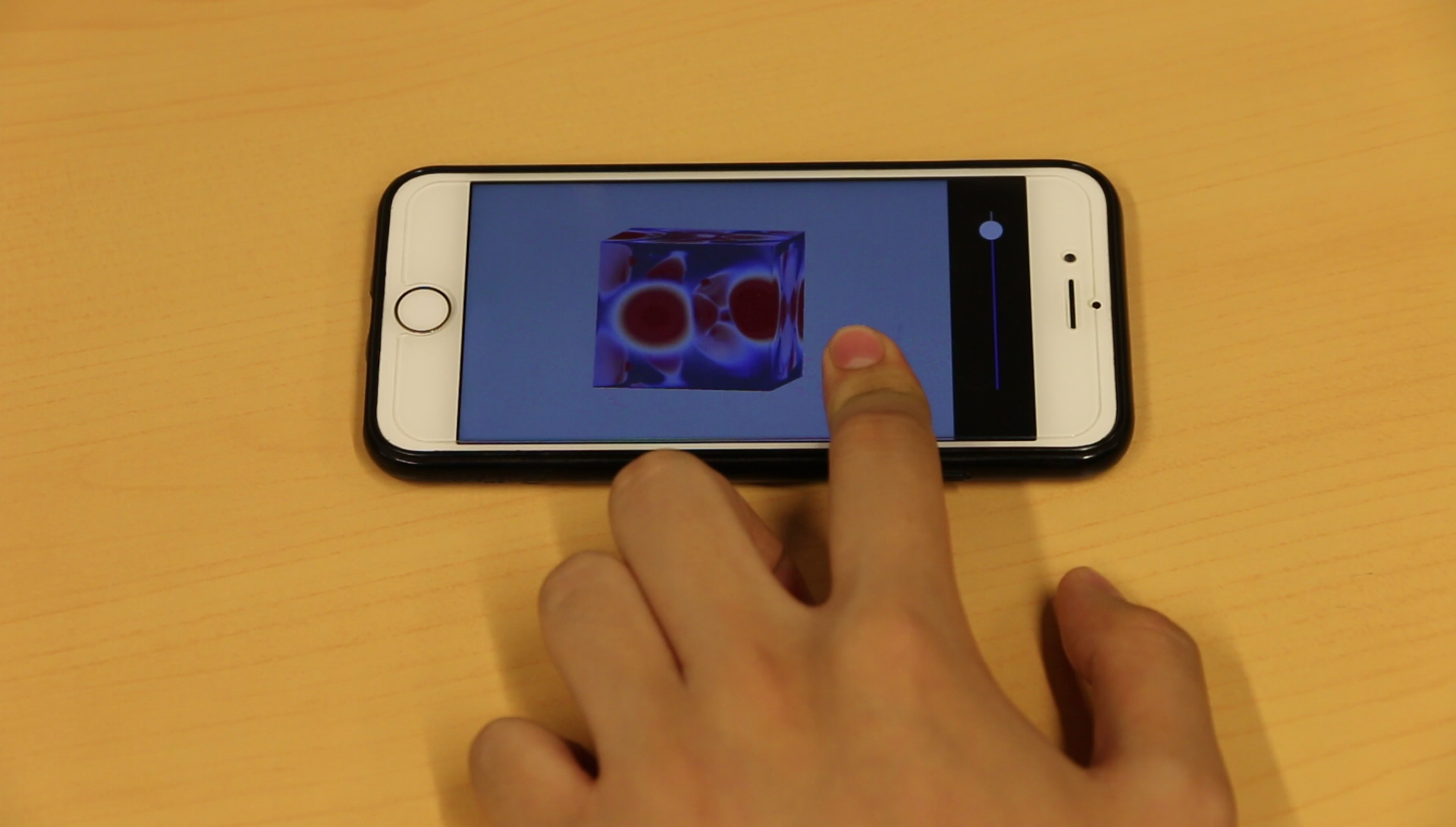

Xiyao Wang, Lonni Besançon, Mehdi Ammi, Tobias Isenberg |

"Augmenting Tactile 3D Data Navigation With Pressure Sensing" Computer Graphics Forum, Wiley, In press, Eurographics Conference on Visualization (EuroVis 2019), 38. |

|

|

|

|

|

| 2019 |

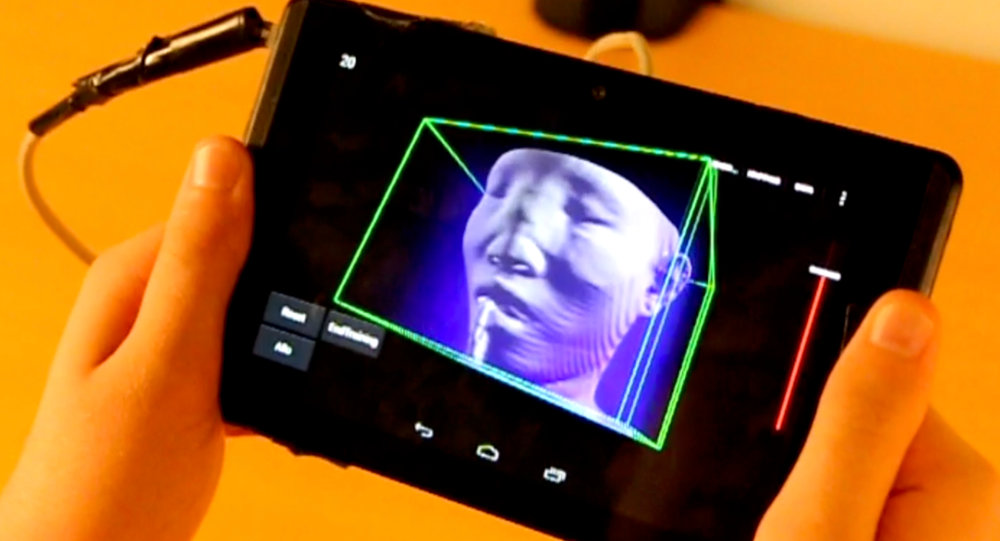

Mickael Sereno, Lonni Besançon, Tobias Isenberg |

"Supporting Volumetric Data Visualization and Analysis by Combining Augmented Reality Visuals with Multi-Touch Input" EuroVis Extended Abstracts, Jun 2019, Porto, Portugal. |

|

|

|

|

|

| 2019 |

Kahin Akram Hassan, Yu Liu, Lonni Besançon, Jimmy Johansson, and Niklas Rönnberg. |

"A Study on Visual Representations for Active PlantWall Data Analysis" In MDPI Data, 2019. |

|

|

|

|

|

| 2019 |

Jouni Helske, Matthew Cooper, Anders Ynnerman, Lonni Besançon |

"The Significant Effect of Visual Representations on Dichotomous Thinking" Journée Visu 2019, Agro Paris Tech, France, May 2019. |

|

|

|

|

|

| 2019 |

Lonni Besançon, Pierre Dragicevic |

"The Continued Prevalence of Dichotomous Inferences at CHI" ACM CHI 2019 (alt.chi). May 4 - 7, 2019, Glasgow, UK. |

|

|

|

|

|

| 2019 |

Tanja Blascheck, Lonni Besançon, Anastasia Bezerianos, Bongshin Lee, Petra Isenberg |

"Glanceable Visualization: Studies of Data Comparison Performance on Smartwatches" IEEE Transactions on Visualization and Computer Graphics, Institute of Electrical and Electronics Engineers, Jan. 2019 |

|

|

|

|

|

| 2018 |

Lonni Besançon, Amir Semmo, David Biau, Bruno Frachet, Virginie Pineau, El Hadi Sariali, Rabah Taouachi, Tobias Isenberg, Pierre Dragicevic |

"Reducing Affective Responses to Surgical Images through Color Manipulation and Stylization." Proceedings of the Joint Symposium on Computational Aesthetics, Sketch-Based Interfaces and Modeling, and Non-Photorealistic Animation and Rendering, Aug 2018, Victoria, Canada. pp.13. |

|

|

|

|

|

| 2018 |

Lonni Besançon |

"An Interaction Continuum for 3D Dataset Visualization.", PhD Thesis, 2018.  |

|

|

|

|

|

| 2018 |

Tanja Blascheck, Anastasia Bezerianos, Lonni Besançon, Bongshin Lee, Petra Isenberg |

"Preparing for Perceptual Studies: Position and Orientation of Wrist-worn Smartwatches for Reading Tasks.", Workshop on Data Visualization on Mobile Devices, ACM CHI, 2018, Montréal, Canada |

|

|

|

|

|

| 2017 |

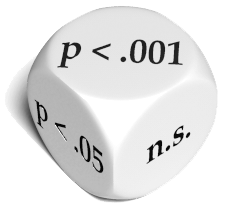

Lonni Besançon, Pierre Dragicevic |

"La Différence Significative entre Valeurs p et Intervalles de Confiance", 29ème conférence francophone sur l'Interaction Homme-Machine, August 2017, Poitiers, France. |

|

|

|

|

|

| 2017 |

Xiyao Wang, Lonni Besançon, Mehdi Ammi, Tobias Isenberg |

"Augmenting Tactile 3D Data Exploration With Pressure Sensing", IEEE VIS 2017, Oct 2017, Phoenix, Arizona, United States. |

|

|

|

|

|

| 2017 |

Lonni Besançon, Paul Issartel, Mehdi Ammi, Tobias Isenberg |

"Interactive 3D Data Exploration Using Hybrid Tactile/Tangible Input", Journées Visu 2017, Jun 2017, Rueil-Malmaison, France |

|

|

|

|

|

| 2017 |

Lonni Besançon, Mehdi Ammi, Tobias Isenberg |

"Pressure-Based Gain Factor Control for Mobile 3D Interaction using Locally-Coupled Devices", CHI 2017 - ACM CHI Conference on Human Factors in Computing Systems, May 2017, Denver, United States. pp.1831-1842.  |

|

|

|

|

|

| 2017 |

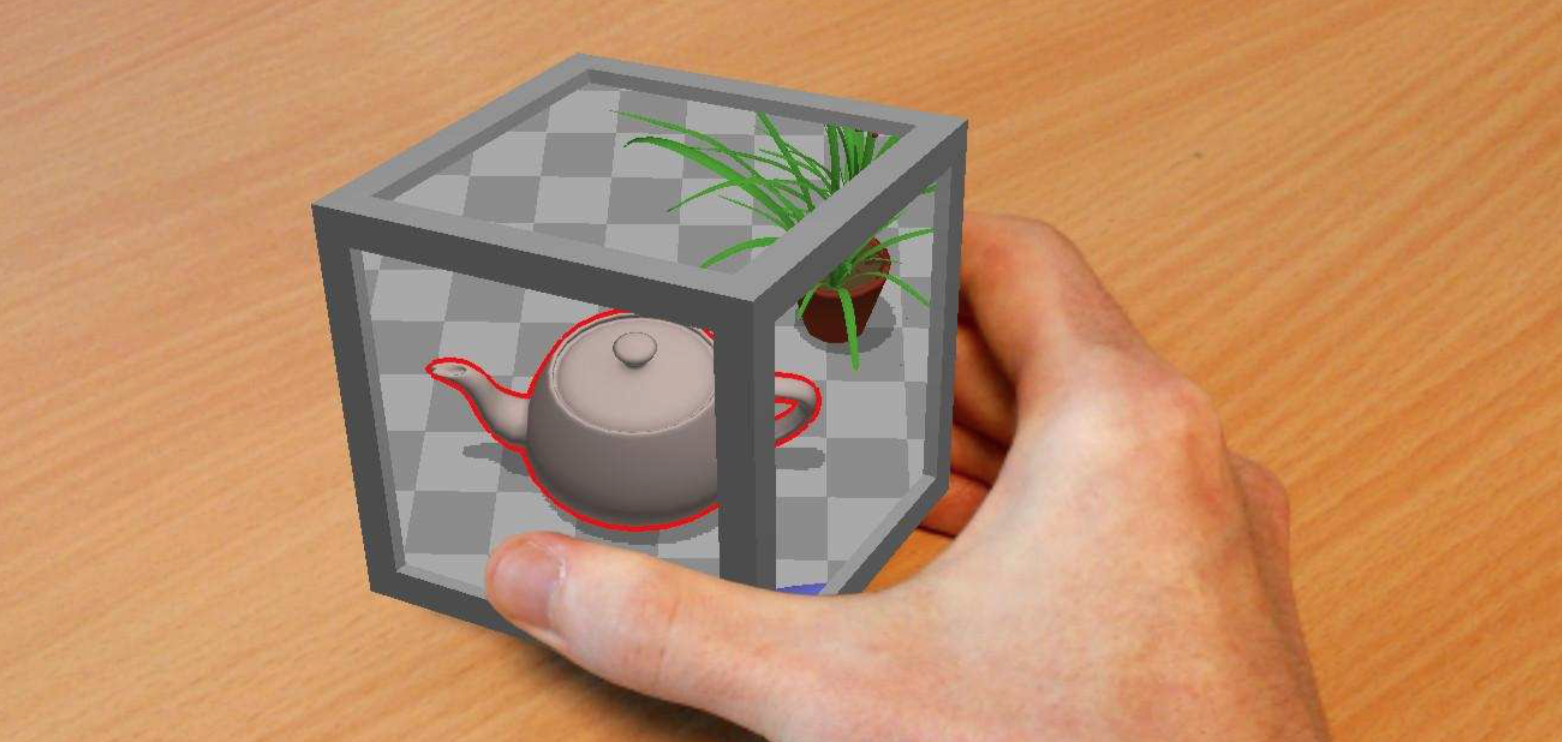

Lonni Besançon, Paul Issartel, Mehdi Ammi, Tobias Isenberg |

"Usability Comparison of Mouse, Touch and Tangible Inputs for 3D Data Manipulation", CHI 2017 - ACM CHI Conference on Human Factors in Computing Systems, May 2017, Denver, United States. pp.4727-4740 |

|

|

|

|

|

| 2016 |

Mickael Sereno, Mehdi Ammi, Tobias Isenberg, Lonni Besançon |

"Tangible Brush: Tactile-Tangible Hybrid 3D Selection", Extended Abstract IEEE VIS, October 23–28, Baltimore, Maryland, USA, 2016. |

|

|

|

|

|

| 2016 |

Paul Issartel, Lonni Besançon, F. Guéniat, Tobias Isenberg, Mehdi Ammi |

"Preference Between Allocentric and Egocentric 3D Manipulation in a Locally Coupled Configuration", ACM 4th Symposium on Spatial User Interaction (SUI) |

|

|

|

|

|

| 2016 |

Lonni Besançon, Paul Issartel, Mehdi Ammi, Tobias Isenberg |

"Hybrid Tactile/Tangible Interaction for 3D Data Exploration", IEEE Transactions on Visualization and Computer Graphics, 23(1), January 2017. (10 pages) |

|

|

|

|

|

| 2016 |

Paul Issartel, Lonni Besançon, Tobias Isenberg, Mehdi Ammi |

"A Tangible Volume for Portable 3D Interaction", International Symposium on Mixed and Augmented Reality (ISMAR), 2016 |

|

|

|

|

|

I was supervised by Mehdi Ammi and Tobias Isenberg and worked on the building of

I had the opportunity to study for one year at the University of Hong Kong (HKU) thanks to an exchange program. I attended classes at the Faculty of Engineering as well as the Faculty of Computer Science. I did not receive a diploma from the University of Hong Kong, as that was not included in the exchange program.

List of courses followed:

I received my Master of Engineering diploma in 2014 after three years of study at Polytech Paris Sud, where I specialized as a Computer Science Engineer.

Design, implementation, and evaluation on a driving simulator of the interface of semi-autonomous cars.