Hybrid Tactile/Tangible Interaction for 3D Data Exploration

Description:

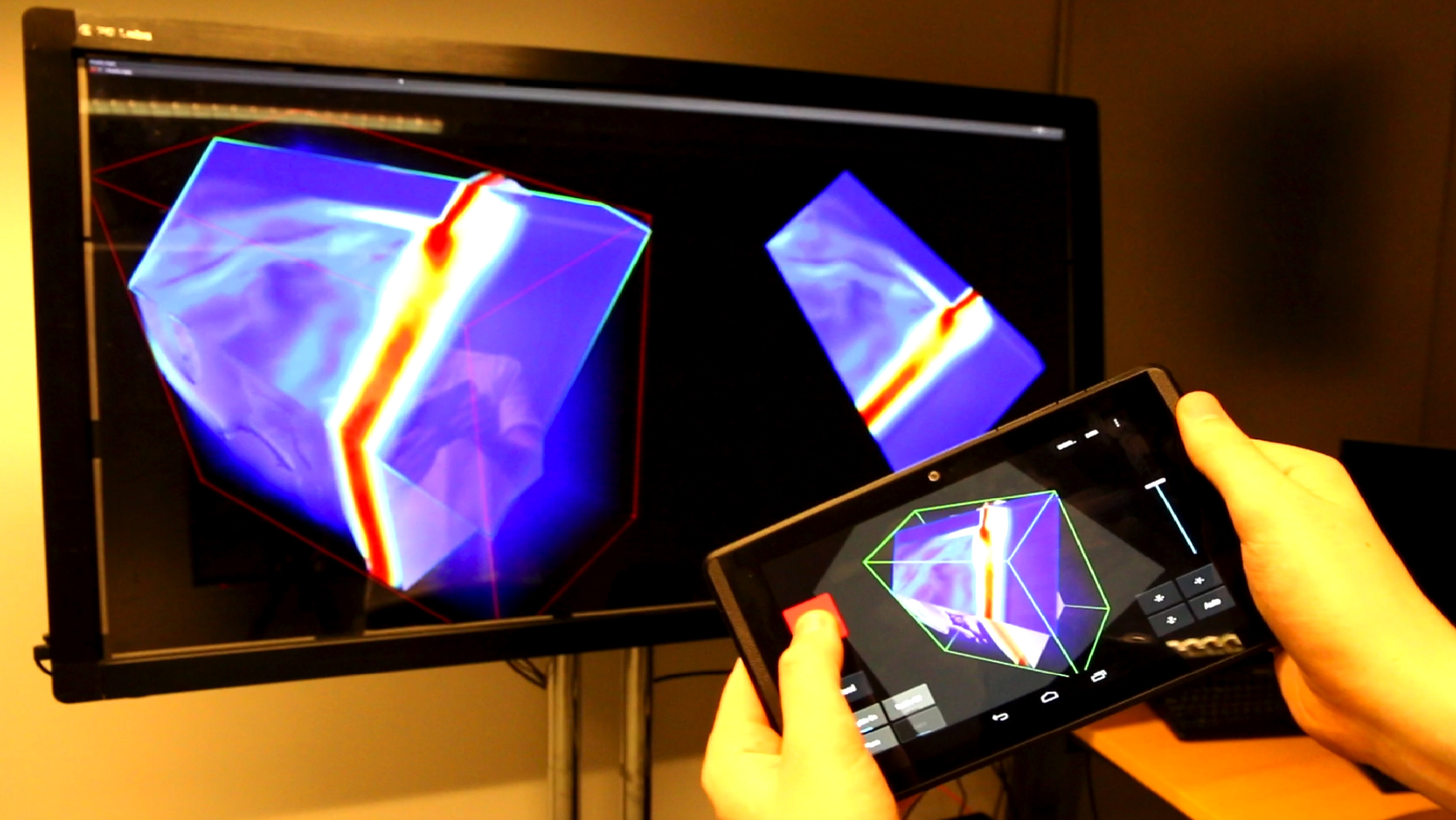

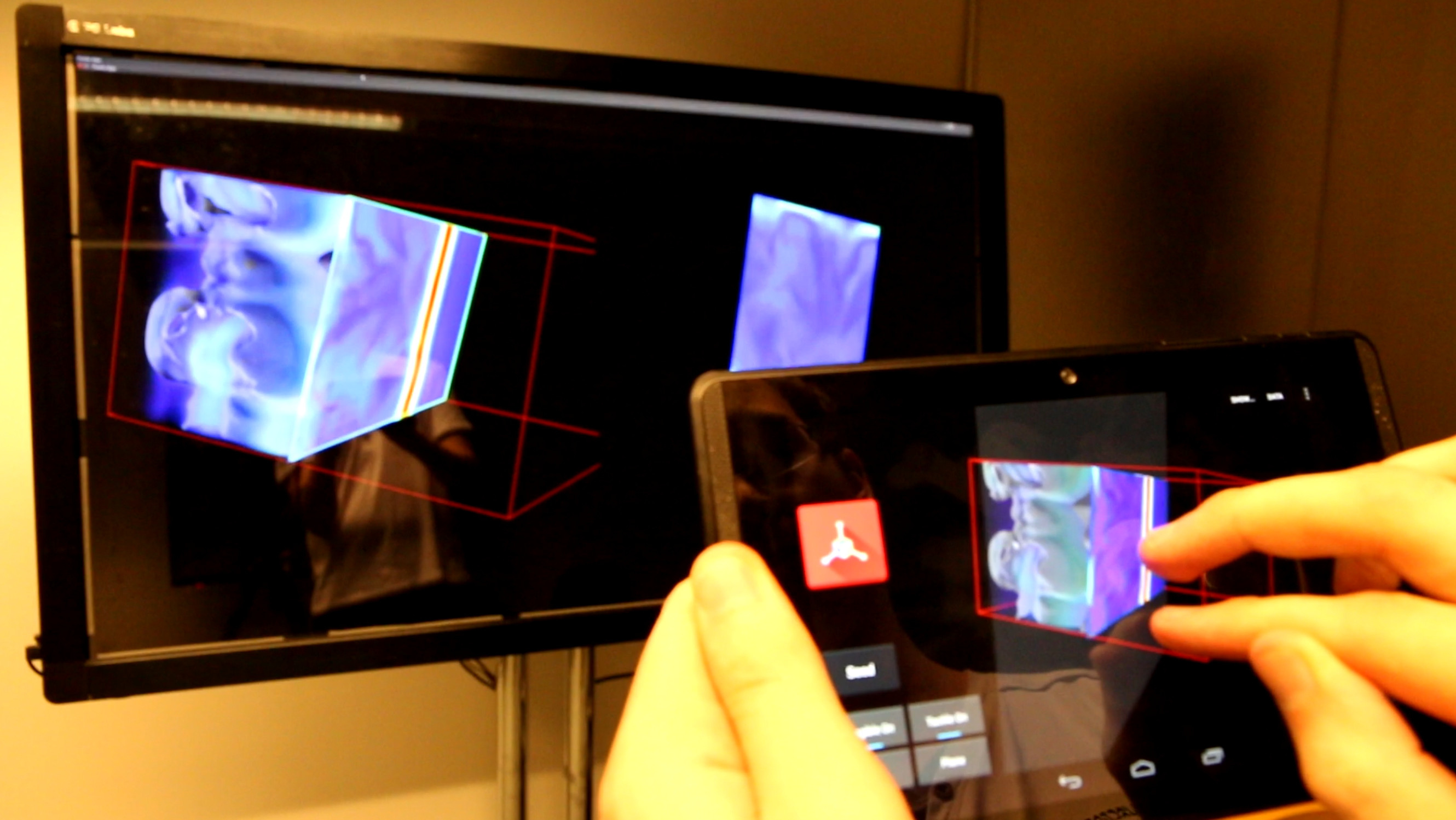

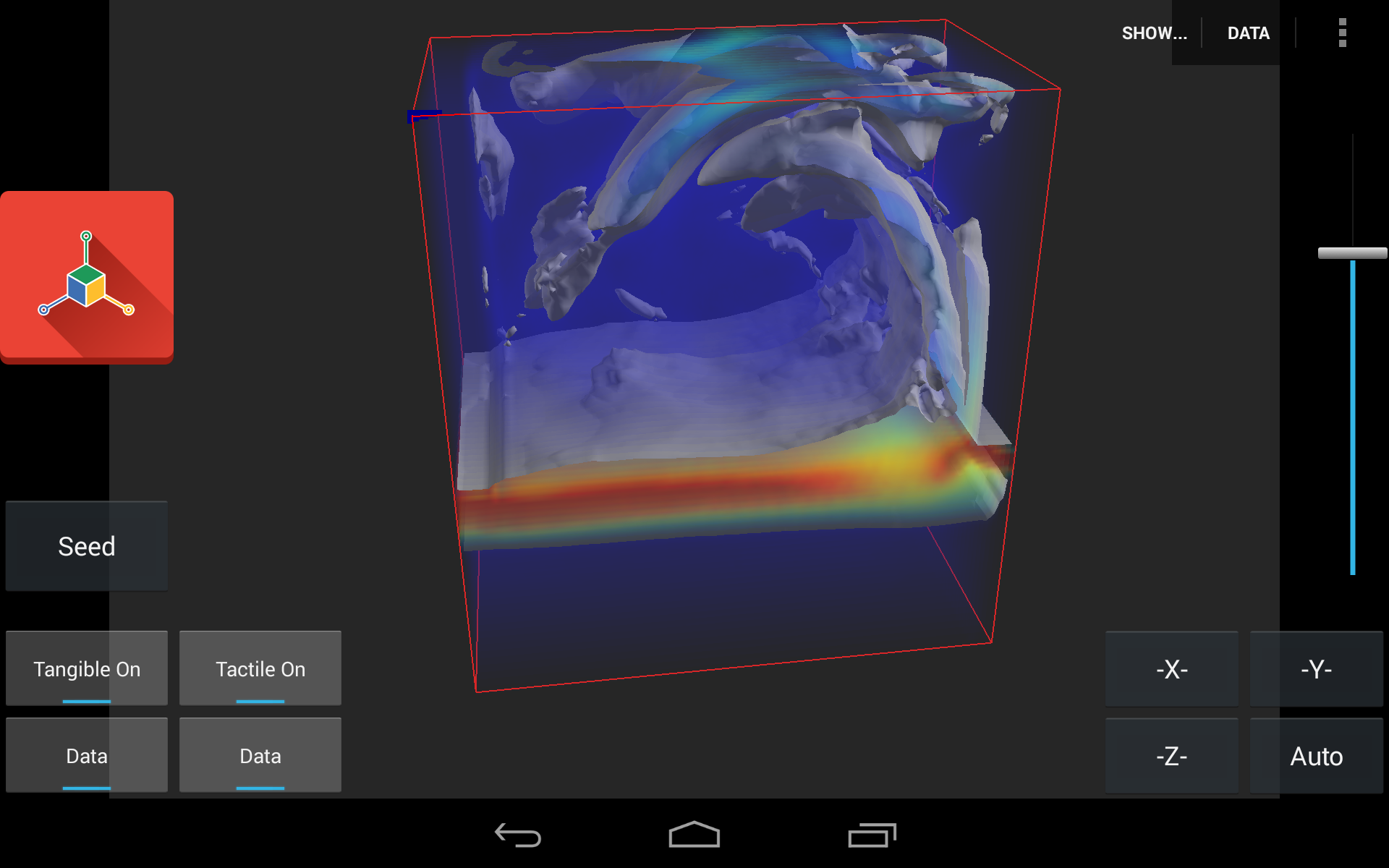

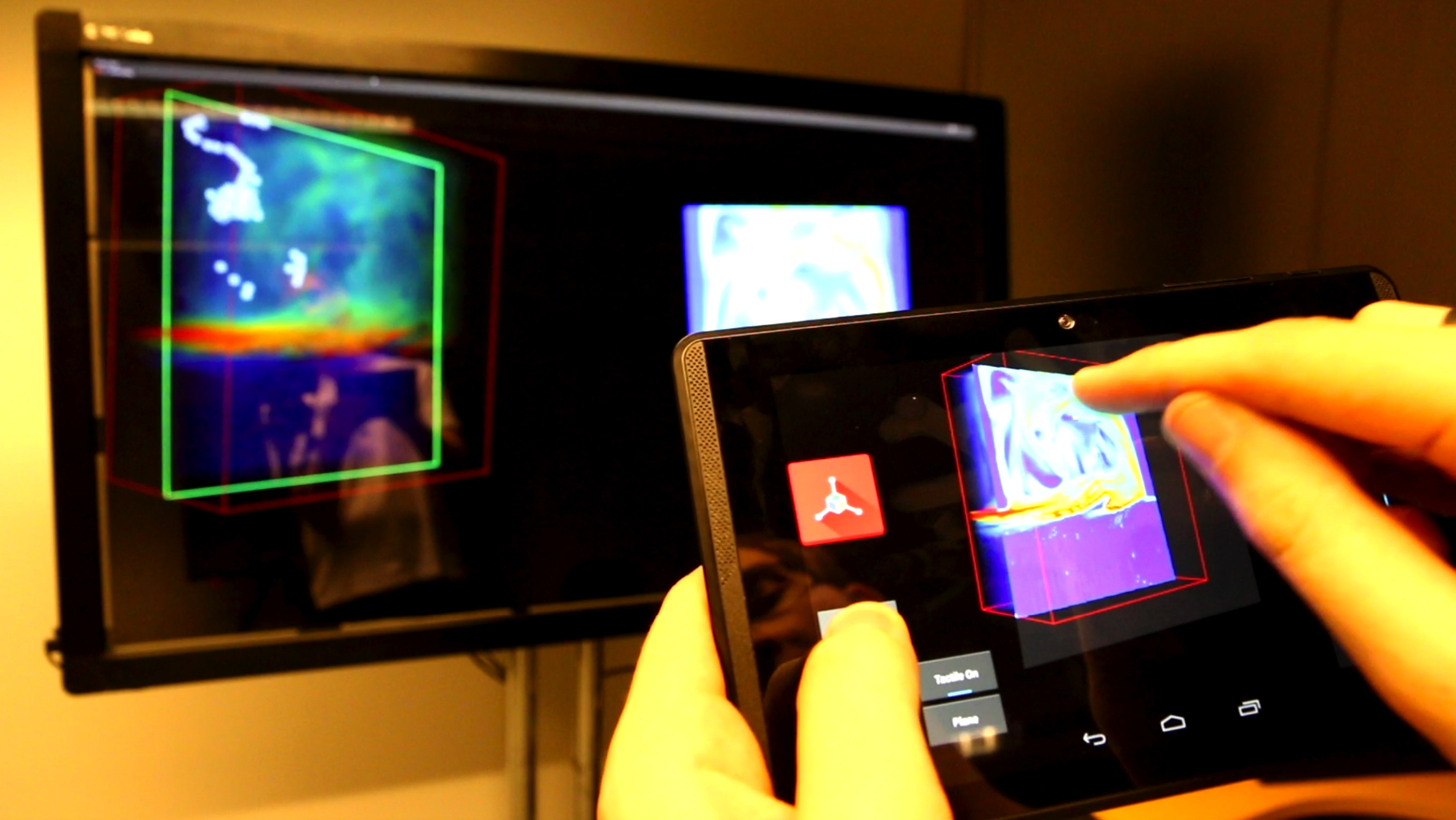

We present the design and evaluation of an interface that combines tactile and tangible paradigms for 3D visualization. While studies have demonstrated that both tactile and tangible input can be efficient for a subset of 3D manipulation tasks, we reflect here on the possibility of combining the two complementary input types. Based on a field study and follow-up interviews, we present a conceptual framework for the use of these different interaction modalities for visualization, both separately and combined, focusing on free exploration as well as precise control. We present a prototypical application of a subset of these combined mappings for fluid dynamics data visualization using a portable, position-aware device that offers both tactile input and tangible sensing. We evaluate our approach with domain experts and report on their qualitative feedback.

Authors:

Lonni Besançon

Paul Issartel

Mehdi Ammi

Tobias Isenberg

Reference:

Lonni Besançon, Paul Issartel, Mehdi Ammi, and Tobias Isenberg (2017) Hybrid Tactile/Tangible Interaction for 3D Data Exploration. IEEE Transactions on Visualization and Computer Graphics, 23(1), January 2017. To appear.

Get the code!

We have implemented the code in two parts: one runs on a separate Unix machine and handles rendering only, and the other runs on the tablet and handles all the interaction. You can get both parts on GitHub with the following links: Here for the Unix code. Here for the tablet code (running it requires a Google Tango tablet).